Since we now have an image hash table, we can analyze the number of unique hashes and determine the deduplication percentage of all our images. This comes with a significant cost in the form of storage, with deduplication of bytes as a means to reduce this. One use case comes from the sheer amount of media that is stored which is comparable to most large media-based tech companies. We’ve already been leveraging the system for various use cases at Canva. Catches watermarking, quality changes, and minor modifications.Our peak workload is in excess of 2000 queries per second, with no performance degradation at that utilization, which maps to ~10k DynamoDB RCU capacity.Average query time of 40ms, p95 at 60ms.Hashes for over 10 billion images in DynamoDB.Some of the highlights of the system are: These changes reduced the number of rows returned from 100000s to 10s for all the previously problematic queries, fixing the issues that occurred on roll-out. These were easy to pick out because the hashes generally consisted of a single character like AAAAAAAA. Then we chose to skip hashing low-complexity images.After each reduction, tests were done to ensure that no relevant image matches were lost. First, the window count was reduced 4x to reduce the number of results that the system filtered.To fix these issues, two changes were made: Here’s an example of what the entries in the database could look like for a perceptual hash:Ĭolored boxes that inflated our search results to the point of unusability This solution was chosen because it is able to guarantee finding hashes for a given Hamming distance at high scale. The algorithm involves splitting the query hash and stored hashes into segments (more on this later). To do this we implemented an algorithm called multi-index hashing ( Norouzi, 2012) on top of DynamoDB tables. The ideal is to have higher precision and avoid false negatives. In addition, the system should have the right level of precision and recall. Allowing this query type needs a different data structure. The previous example cannot be used to create this system as it only supported matching if hashes are equal. The system output would be all the stored hashes within that Hamming distance. Matching perceptual hashesĪ perceptual hash matching system can use a hash and a Hamming distance as input. Perceptual hashes and Hamming distances can be used to build a system that can find modified images. If there were no modifications, their hashes would have a Hamming distance of 0. With more changes, there would be a larger Hamming distance.

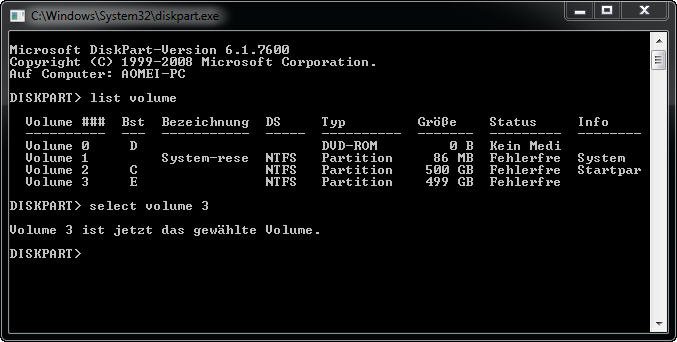

Since it’s a minimal modification, there is only a small Hamming distance of 2 between them. The original image above is modified with a watermark. In the example below, we have two visually duplicated images (IMAGE_1 and IMAGE_2) whose SHA512 hash keys are the primary keys in a DynamoDB table, and their image IDs are the secondary keys. The ability to map any file into unique hash keys is useful for matching duplicate content but is not very viable in the case of images.įor instance, you could implement a simple reverse image search in an image library using the image file hash key as a primary key in a database. Common hash algorithms include MD5 and SHA. A hash function is an algorithm that maps data to unique fixed-sized values called hash keys, which are represented as strings. Hashing is one simple way of implementing a reverse image search. This blog post describes the design and process we went through to build such a system to the scale of Canva. To cater to this, one tool we use is perceptual hashing with an internally built reverse image search system. The diversity of this media poses challenges around the moderation and reduction of unnecessary duplicate content. Canva hosts a huge collection of ever-growing, user media that needs to be stored and managed properly.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed